Hi there!

Just recently there was an interesting performance issue raised in the WPT Forum that served really well as a showcase for the rich debugging capabilities of WPT and I would focus in this Blog Post on Transfer-Encoding: Chunked.

Transfer-Encoding: Chunked was defined in the HTTP1.1 RFC and can be a nice performance improvement regarding Time-to-Render.

In HTTP1.0 the default behaviour of Browser-Server interaction was to a establish a TCP Connection to a Webserver using TCP’s well-known 3-way-handshake. After the Connection was established, the Browser fired its GET-Request and waited for the response. The requested object was sent down the wire from the Server to the Browser, and as soon as all Bytes were transmitted, the Server closed the TCP Connection. The Browser then would establish a new TCP connection to request the next object and so on.

With HTTP1.1 so-called persistent connections became the default, meaning that a Browser could request sequentially, one after another, multiple objects on a single TCP connection (though it would have to wait for each request to be fulfilled, before it could request the next object. Otherwise it would be Pipelining).

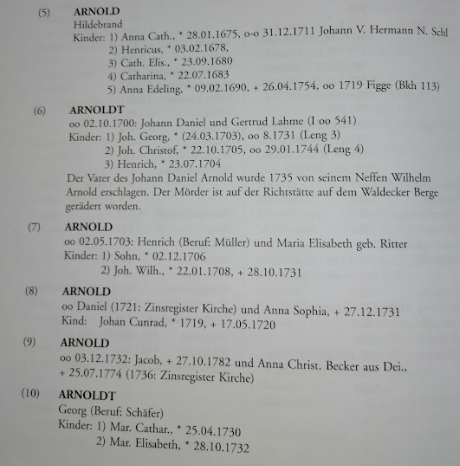

Now looking at performance a transportation method was defined in HTTP1.1 called Transfer-Encoding: Chunked. Reason for this was, that sometimes the Werbserver would have already a couple of Bytes to be sent, but would not know yet the total length (size) of the whole answer. Due to this the Webserver would have to wait until the last Byte is served to him, so the Webserver could write the correct Content-Length Header of the full answer into the HTTP Header.

Otherwise, how would the Browser be able to know, that the received answer is complete, and be allowed to send the next request on this very same TCP connection?

Transfer-Encoding: Chunked solves this problem. It tells the Browser basically: “Here are the first Bytes to your request, but more will come. I do not know yet how many, but I will mark the ending of the Byte stream with a defined marker”. How this exactly is marked can be seen in the above linked RFC. And the performance improvement is, that the Browser can already “work” with this partial answer to start rendering or request other objects. How to use this technique best is described here by Steve Souders.

So after we have now some basic understanding of the Why and How of Transfer-Encoding: Chunked let’s get to the issue of this specific site:

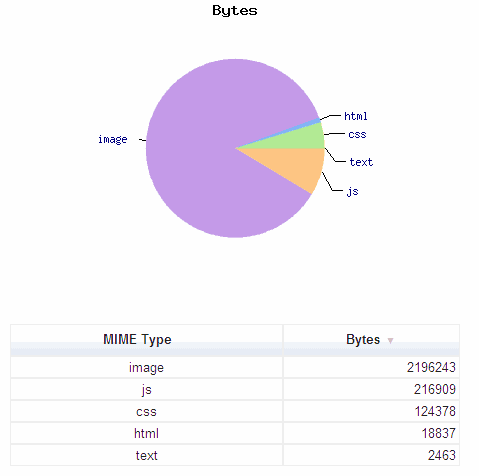

In the waterfall below you can see, how this website was loading. You see, that the base page, the HTML document, is loading for a very slow 15 seconds.

What might be the reason? I did repeat the test a couple of times to make sure this wasn’t a single packet being lost. But the pattern remained stable.

What might be the reason? I did repeat the test a couple of times to make sure this wasn’t a single packet being lost. But the pattern remained stable.

As you can see in the bandwidth utilization below the waterfall, it is most definitely not a bandwidth issue. Most of the time the bandwidth consumption is close to zero. And also you see by the blue bar, that the content started to come down very early, but it took a REALLY long time to complete.

So, clicking at the object will tell us a little bit more about it:

First you see the size of the object. A small 3.3 KByte. So the reason for the long transfer time is not the size of the object. And again, that the first Bytes of the object arrived under 1 second, but it took 15 seconds for the full answer.

Additionally you see, that the Browser was allowing the content to be gezipped, and the Server indeed not only gezipped it, but also applied Transfer-Encoding: Chunked.

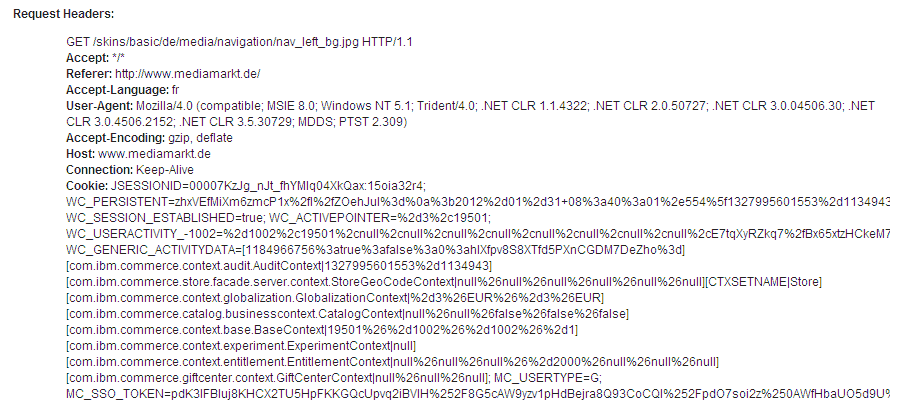

So, how can you dig deeper into this? A good idea is using the feature TCPDump of WPT, which allows you to see each and every Byte and its timing on the wire. So when doing this, you get the following picture:

Now you get some more information, of what the issue is. You can see in the middle pane, that the answer consisted of 3 chunks, whereof the first 2 chunks arrived almost instantly (Frame 8 and 28), and then, after 15 seconds, you received the rest of 444 Bytes in Frame 242. So it seems, that the beginning of the object could be sent very fast, but then the webserver had to wait for 15 seconds for the last part to be generated. Unfortunately, the content is gezipped, so you can’t see, what was the content of these last 444 Bytes. You can only guess, that somewhere in the last 15% of the document is the code causing the delay.

But fortunately enough there is Pat. Pat Meenan. He gave me the advise: You might want to look at the setHeader command from WPT’s scripting capabilities. Bingo! setHeader allows you to modify/override any of the Browsers GET-Request Headers. And that’s what you can do. Simply use the Script:

setHeader Accept-Encoding: None

navigate http://www.example.com

and your done!

Now when repeating this test, you see indeed in the Headers that no gzip was applied:

And in Wireshark you can see the content of the last chunk in plain text!

So now you CAN (or rather COULD) see, what code was in the delayed frame(s). Could, as in this “real-life-example” the site owner fixed the issue, before I was able to apply the Accept-Encoding: None Header. 🙂

Anyway, two scenarios might have been possible to see:

a) Issue still present -> You can see the causing code piece.

b) Issue gone -> You have a problem with flushing gzip buffers.

or something totally wild like serverside malware, that tried and failed to attach malware code to the bottom of the object… 😉